Your team uses AI every day. Your SPRS score doesn't reflect that.

Your proposal writers use Claude to draft RFP responses. Your engineers ask ChatGPT to summarize test procedures. Your contracts team uses Gemini to cross-reference SOW language. None of this is logged. None of it respects CUI boundaries.

CMMC Phase 1 is already in solicitations. Phase 2 — third-party audits — starts November 2026. When the C3PAO asks "how does your team use AI with controlled information?" you need an answer that isn't "we have a policy document."

Let your team use AI. Control exactly what it sees.

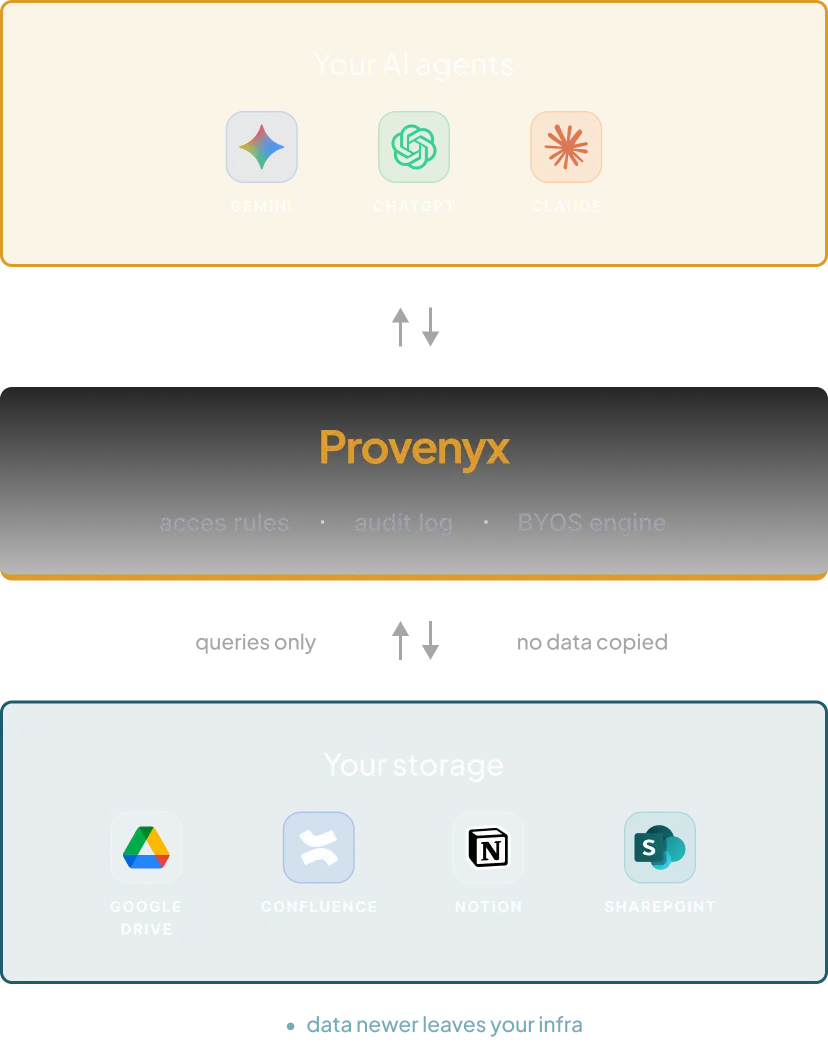

Provenyx sits between AI models and your company's storage. Your team asks questions in plain language — and gets answers only from the documents you've approved.

No data is copied to external servers. No AI model sees anything beyond what you've explicitly allowed. Every interaction is logged and auditable.

- Works with Claude, ChatGPT, and Gemini simultaneously

- Setup in minutes — no IT department needed

- Export audit logs for CMMC assessments, NIST 800-171 reviews, or prime contractor audits

Three things happening at your company right now

"I pasted the performance narrative from the NAVAIR program into Claude to help draft the new proposal section. Was that CUI? I honestly don't know."

Your proposal writer needed context fast. The controlled content was in the same Drive folder as the approved boilerplate. No boundary enforcement. No log. If it was CUI, you now have a spillage event you can't even trace.

"Our prime asked us to prove our AI usage is CMMC-compliant. I don't even know where to start."

Primes are already auditing their supply chain. Lockheed, Northrop, Raytheon — they're not waiting for Phase 4. You need to show controlled AI usage, not just a written policy. You have no artifacts to show.

"Our IT guy set up a ChatGPT Enterprise account. He said it's secure. But nobody controls what files people upload into it."

ChatGPT Enterprise encrypts data in transit and at rest. Great. But it doesn't know which files are CUI-marked, which user has what clearance level, or which content should never leave your storage boundary. That's not AI governance. That's a paid chat subscription.

Three steps to controlled AI access

Connect your storage

Link your existing storage — Google Drive, Notion, Confluence, SharePoint. Your data stays where it is.

Set access rules

Define which folders and documents each AI model can see. Control access at the file level — not just by user role.
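To make file-level rules concrete, here is a minimal sketch of how a per-model allow/deny check could work. It is an illustration only, not Provenyx's actual configuration format: the `ModelPolicy` class, the `POLICIES` table, the `visible_files` helper, and the folder layout are all hypothetical.

```python
from dataclasses import dataclass, field
from fnmatch import fnmatchcase


@dataclass
class ModelPolicy:
    """Hypothetical rule set for one AI model (not a real Provenyx schema)."""
    allow: list[str] = field(default_factory=list)  # glob patterns the model may read
    deny: list[str] = field(default_factory=list)   # glob patterns that always stay hidden

    def can_read(self, path: str) -> bool:
        # Deny rules win over allow rules, and anything unmatched is hidden by default.
        if any(fnmatchcase(path, pattern) for pattern in self.deny):
            return False
        return any(fnmatchcase(path, pattern) for pattern in self.allow)


# Assumed example: one policy per model, keyed by a made-up model name.
POLICIES = {
    "claude": ModelPolicy(
        allow=["proposals/approved/*"],
        deny=["*(CUI)*"],
    ),
}


def visible_files(model: str, paths: list[str]) -> list[str]:
    """Return only the files the given model is allowed to see."""
    policy = POLICIES.get(model)
    if policy is None:
        return []  # a model with no policy sees nothing
    return [p for p in paths if policy.can_read(p)]


files = [
    "proposals/approved/Technical Approach Boilerplate v4.docx",
    "proposals/approved/Technical Volume (CUI).pdf",
    "finance/budget-actuals.xlsx",
]
print(visible_files("claude", files))
```

In this sketch the CUI-marked file stays hidden even though it sits in an approved folder, because the rule is evaluated per file rather than per folder or user role.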

Your team asks, AI answers

Employees ask questions in natural language. They get answers only from approved sources. Every query is logged.
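As a rough picture of what "every query is logged" could mean in practice, the sketch below builds one audit record per question and exports the records as JSON Lines. The field names and both helper functions are assumptions made for illustration, not the product's real log format.

```python
import json
from datetime import datetime, timezone


def audit_record(user: str, model: str, question: str,
                 sources: list[str], blocked: list[str]) -> dict:
    """Build one hypothetical audit entry for a single AI query."""
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "model": model,
        "question": question,
        "sources": sources,   # approved files the answer drew on
        "blocked": blocked,   # files that matched the query but were withheld
    }


def export_jsonl(records: list[dict]) -> str:
    """Serialize records as JSON Lines, one entry per line."""
    return "\n".join(json.dumps(r, sort_keys=True) for r in records)


record = audit_record(
    user="proposal.writer@example.com",
    model="claude",
    question="Summarize our past performance on ISR programs.",
    sources=["proposals/approved/Technical Approach Boilerplate v4.docx"],
    blocked=["proposals/approved/Technical Volume (CUI).pdf"],
)
print(export_jsonl([record]))
```

Recording the withheld files alongside the sources used is a design choice in this sketch: it lets a reviewer see that a boundary was enforced, not only what was answered.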

What your team can safely ask — and what stays controlled

New RFP dropped Friday. The proposal team needs to reference past performance narratives and technical volumes — fast.

AI pulls from approved boilerplate and past performance volumes — but never touches CUI-marked technical data, source selection documents, or budget actuals. The proposal writer gets the context they need. The ISSO doesn't get a spillage report.

- Past Performance — NAVAIR ISR (Approved).pdf

- Technical Approach Boilerplate v4.docx

- NAVAIR ISR — Technical Volume (CUI).pdf

- Budget Actuals — NAVAIR FY25.xlsx

Prime contractor sent a new SOW. Your contracts team needs to check it against existing obligations and standard terms.

AI references your approved contract templates and DFARS flow-down clauses — but has no access to active pricing schedules, subcontractor rates, or negotiation notes. The contracts manager gets a clause-by-clause comparison. Sensitive commercial terms stay invisible.

- Standard DFARS Flow-Down Clauses.docx

- Northwell SOW — Draft v2.pdf

- Subcontractor Rate Cards FY26.xlsx

- Negotiation Notes — Northwell.docx

Customer requested an updated test summary for the CDR package. The engineer needs to synthesize across multiple test reports.

AI pulls from approved test reports that have been cleared for the CDR package — but can't see raw test data, failure analysis reports marked CUI, or anything from adjacent programs. The engineer gets a clean summary. Program boundaries stay intact.

- ATP-001 Acceptance Test Report.pdf

- ATP-004 Final Test Summary.pdf

- Failure Analysis — Block 2 Radar (CUI).pdf

- Adjacent Program — EW Module Test Data.pdf